Environmental data in R

RStudio project

Open the RStudio project that we created in the first session. I recommend to use this RStudio project for the entire course and within the RStudio project create separate R scripts for each session.

- Create a new empty R script by going to the tab “File”, select “New File” and then “R script”

- In the new R script, type

# Session 4: Environmental data in Rand save the file in your folder “scripts” within your project folder, e.g. as “4_EnvData.R”

In ecosystem and biodiversity modelling, we often aim to understand how environment is shaping ecosystems and biodiversity patterns. Thus, additional to our biodiversity data we need environmental information. Many data are now available at very high spatial resolution, e.g. lidar data. However, often, high resolution data are not necessarily available globally - although the data are constantly improving. I can’t give you a full overview over all available data sets. Rather, you should get an idea how you process the data to make best use of them for your ecosystem and biodiversity models.

1 Climate data

The raster package is offering direct access to some

standard repositories; see the help pages ?getData.

library(raster)## Lade nötiges Paket: spWe have already used this for extracting climate data from the worldclim database (http://worldclim.org/). Please note that there are newer climate data sets out, e.g. the Chelsa climatologies (http://chelsa-climate.org/) that are preferable in many cases (Karger et al. 2017).

We download the 19 bioclimatic variables at a 10’ resolution

(res=10). The variables are indicated by

var="bio". For an explanation of the 19 bioclimatic see

here: https://www.worldclim.org/data/bioclim.html. (Note that

Chelsa climatologies offer the same set of 19 bioclim variables.) Other

valid variable names are ‘tmin’, ‘tmax’ and ‘prec’. See the

?getData help pages for more information.

clim <- getData("worldclim", var="bio", res=10, download=T, path="data")

# Now, let's look at the data:

clim

# Can you explain, what a raster stack is?

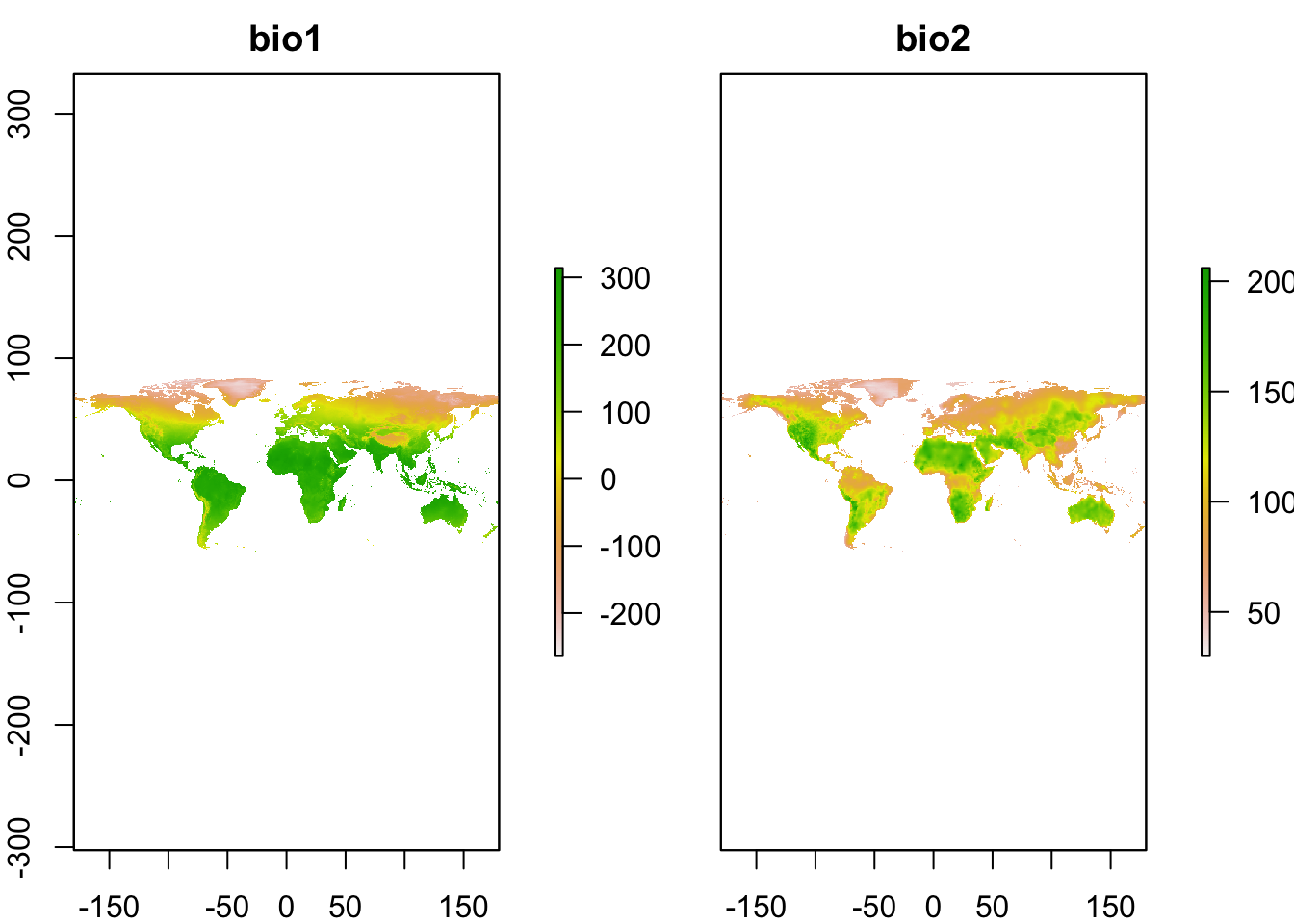

plot(clim[[1:2]])## class : RasterStack

## dimensions : 900, 2160, 1944000, 19 (nrow, ncol, ncell, nlayers)

## resolution : 0.1666667, 0.1666667 (x, y)

## extent : -180, 180, -60, 90 (xmin, xmax, ymin, ymax)

## crs : +proj=longlat +datum=WGS84 +no_defs

## names : bio1, bio2, bio3, bio4, bio5, bio6, bio7, bio8, bio9, bio10, bio11, bio12, bio13, bio14, bio15, ...

## min values : -269, 9, 8, 72, -59, -547, 53, -251, -450, -97, -488, 0, 0, 0, 0, ...

## max values : 314, 211, 95, 22673, 489, 258, 725, 375, 364, 380, 289, 9916, 2088, 652, 261, ...

1.1 Climate scenarios

The Chelsa and worldclim data bases also offer downscaled climate

scenarios. The scenarios stem from the World Climate Research Programme

Coupled Model Intercomparison Projects (CMIPs). The most recent is the

CMIP6 and the corresponding scenarios can be downloaded form the Chelsa

or worlclim websites. The built-in raster package function

getData() only provides access to the CMIP5 scenarios but

which we will use here for convenience. As argument for the dataset name

we provide CMIP5. Additionally, we have to indicate which

model (global circulation model, GCM) we want to download,

which rcp (representative concentration pathway, RCP) and

which year (projection period; 2050 = average for

2041-2060, 2070 = average for 2061-2080). More information on the model

abbreviations and the available RCPs can be found here: https://worldclim.org/data/cmip5_10m.html. As above, we

have to provide var and res arguments as

well.

# Please note that you have to set download=T if you haven't downloaded the data before:

clim_fut <- getData('CMIP5', var='bio', download=F, res=10,

rcp=45, model='NO', year=50, path=my_filepath)

# Rename to hold the same bioclim names as the current climate layers

names(clim_fut) <- names(clim)

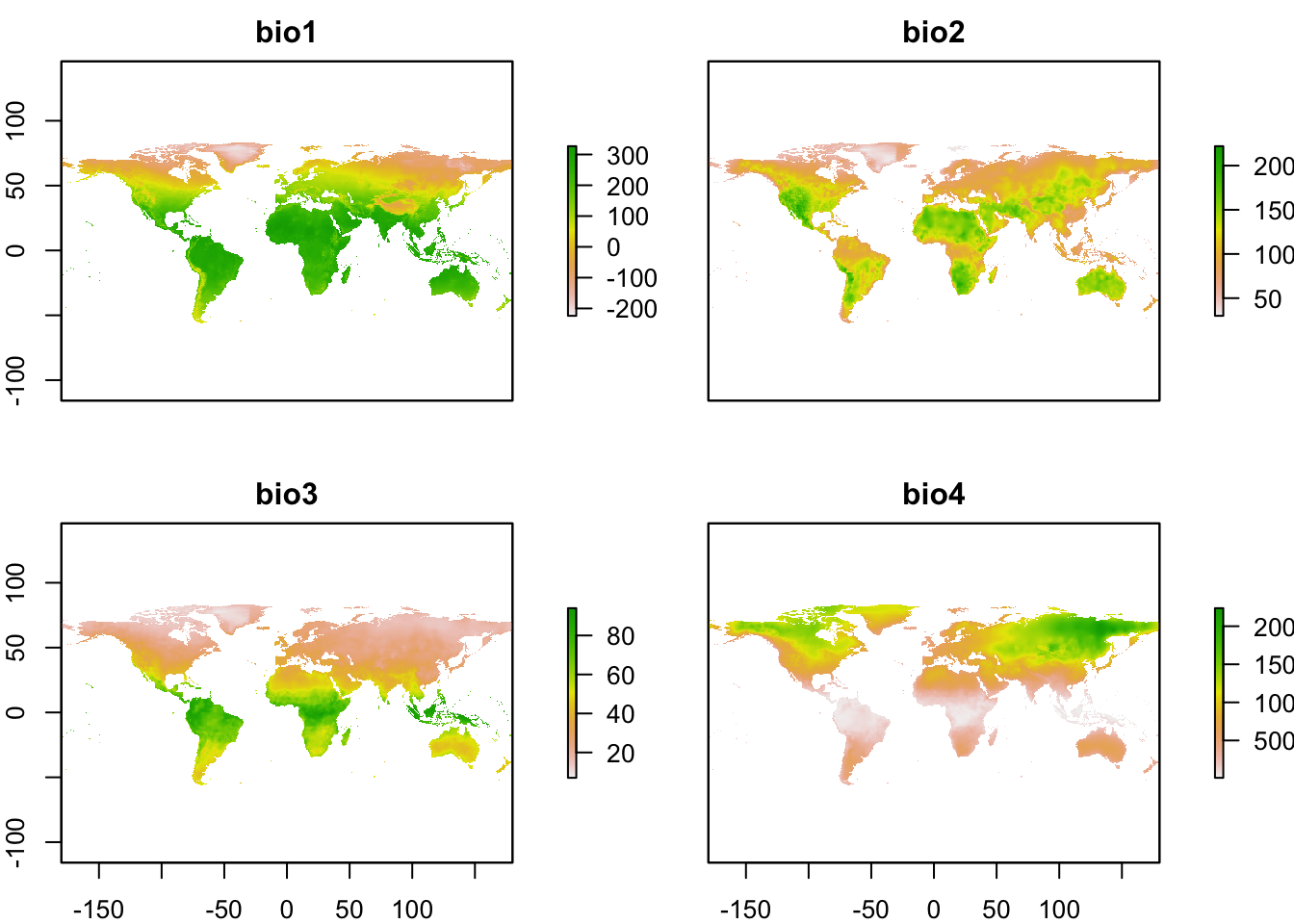

# Plot climate layers (only the first 4 layers for simplicity)

plot(clim_fut[[1:4]])

Exercise:

- Take a look at available scenarios and download data for two other GCMs, RCPs, and time periods: https://worldclim.org/data/cmip5_10m.html

2 Land cover data

We use the Corine Land Cover (CLC) data coordinated by the European

Environment Agency (EEA). Data for Europe are available through the

online portal: https://land.copernicus.eu/pan-european/corine-land-cover/.

The data are free of use but you have to register in order to proceed to

downloading. CLC products are based on photointerpretation of satellite

images and are available for different time slices since 1990. CLC

provide information on the main land cover/use type at 100m spatial

resolution, distinguishing 44 different land cover/use types. The data

are provided as GeoTIFF, which can be easily read in using the

raster() command.

An alternative dataset at the global scale is the Collection 2 of the Copernicus Global Land Cover layers (Buchhorn, Lesiv, et al. 2020), e.g. available for the year 2019 through https://doi.org/10.5281/zenodo.3939050 (Buchhorn, Smets, et al. 2020). Data are available at 100m spatial resolution, offering fractional cover of the main land cover types as well as a discrete map of the main land cover type.

Course participants can download the CLC data for Germany directly from moodle. Please place the GeoTIFF file (and its auxiliary file aux.xml) in your data folder.

# Read in the CLC data for Germany:

corine2018 <- raster('data/CLC2018_DE.tif')

# Read in the legend:

legend <- read.table('data/CLC2018_CLC2018_V2018_20_QGIS.txt',sep=',') # columns 2-4 indicate the RGB colours for reproducing the standard CLC colour maps. Column 6 provides the land use/cover classes.

legend[,6]## [1] "Continuous urban fabric"

## [2] "Discontinuous urban fabric"

## [3] "Industrial or commercial units"

## [4] "Road and rail networks and associated land"

## [5] "Port areas"

## [6] "Airports"

## [7] "Mineral extraction sites"

## [8] "Dump sites"

## [9] "Construction sites"

## [10] "Green urban areas"

## [11] "Sport and leisure facilities"

## [12] "Non-irrigated arable land"

## [13] "Permanently irrigated land"

## [14] "Rice fields"

## [15] "Vineyards"

## [16] "Fruit trees and berry plantations"

## [17] "Olive groves"

## [18] "Pastures"

## [19] "Annual crops associated with permanent crops"

## [20] "Complex cultivation patterns"

## [21] "Land principally occupied by agriculture with significant areas of natural vegetation"

## [22] "Agro-forestry areas"

## [23] "Broad-leaved forest"

## [24] "Coniferous forest"

## [25] "Mixed forest"

## [26] "Natural grasslands"

## [27] "Moors and heathland"

## [28] "Sclerophyllous vegetation"

## [29] "Transitional woodland-shrub"

## [30] "Beaches dunes sands"

## [31] "Bare rocks"

## [32] "Sparsely vegetated areas"

## [33] "Burnt areas"

## [34] "Glaciers and perpetual snow"

## [35] "Inland marshes"

## [36] "Peat bogs"

## [37] "Salt marshes"

## [38] "Salines"

## [39] "Intertidal flats"

## [40] "Water courses"

## [41] "Water bodies"

## [42] "Coastal lagoons"

## [43] "Estuaries"

## [44] "Sea and ocean"

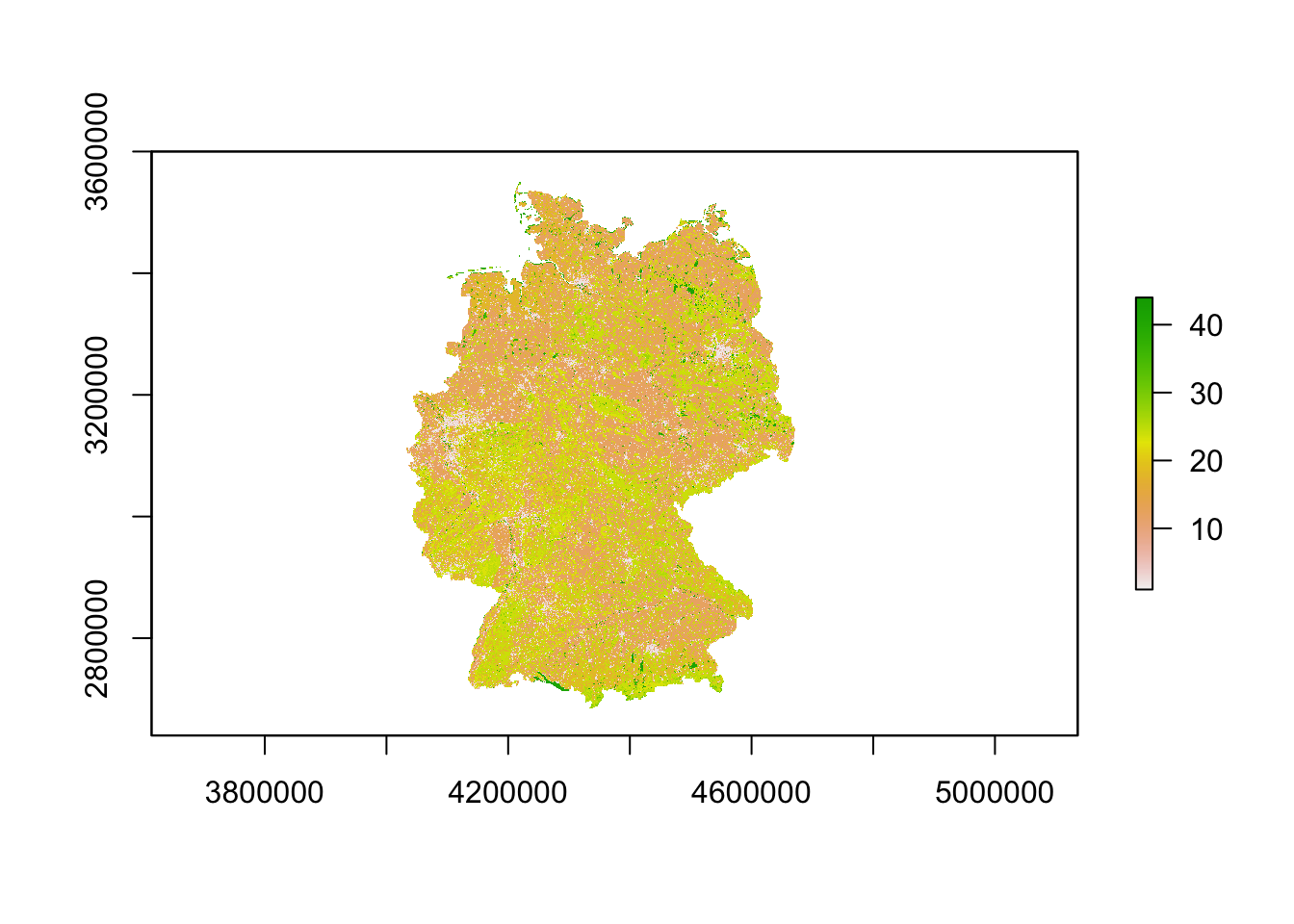

## [45] "NODATA"# Plot the CLC map

plot(corine2018)

For many modelling applications, we might actually be more interested in proportional land cover, and potentially at a coarser resolution. We can easily achieve this by aggregating the data to the desired coarser resolution, e.g. 1km. We can then express the proportional cover at 1km (or any coarser than the original) resolution as the relative number of 100m cells within the coarser cells with a particular land cover class.

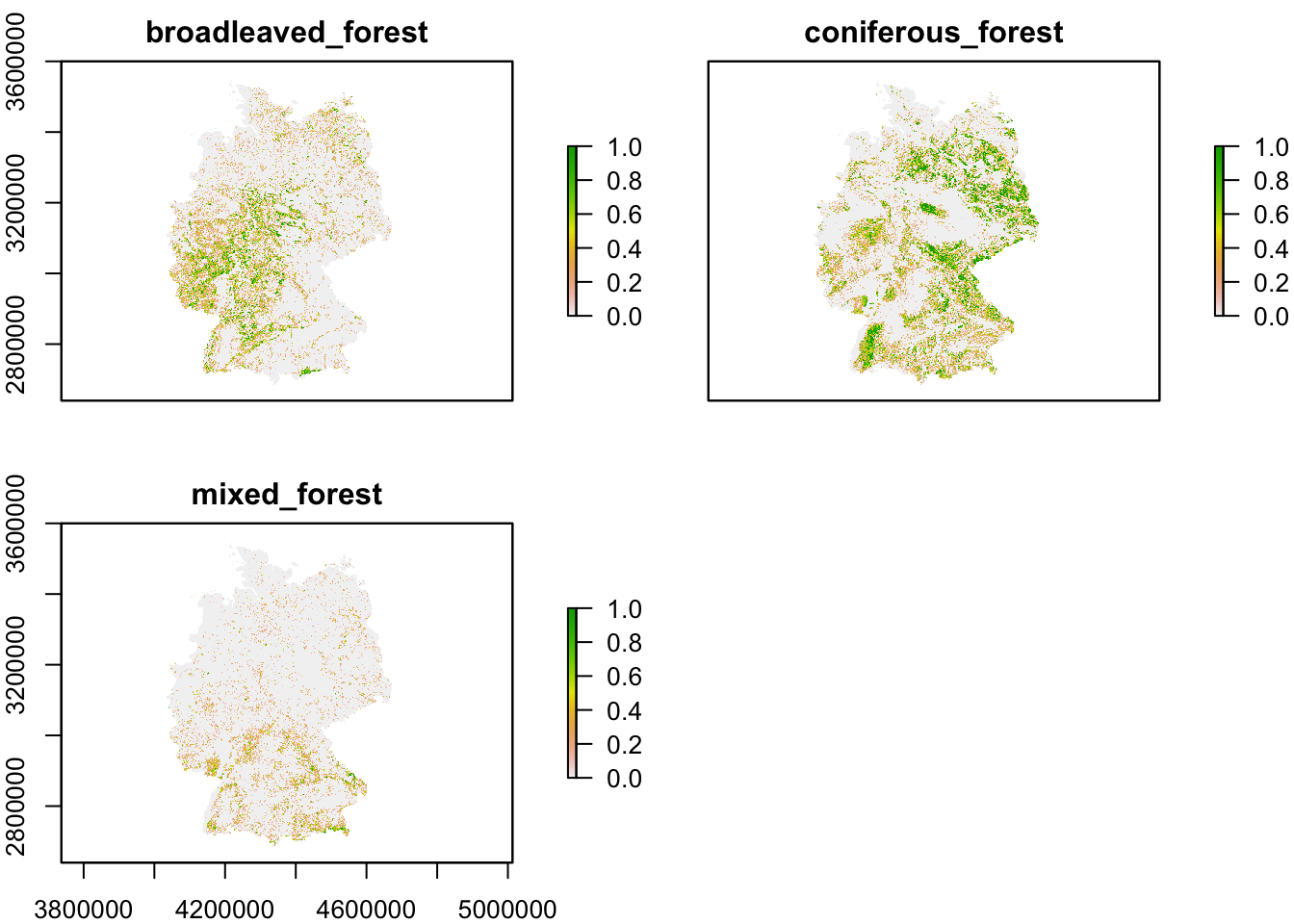

For example, let’s calculate the proportional cover of broad-leaved, coniferous and mixed forest (CLC classes 23-25), respectively, at a 1 km resolution.

# Number of cells in x/y direction that will be aggregated

aggregation_factor <- 10

# Calculate proportional cover for class 23 (broad-leaved forest) at 1km resolution

corine2018_1km <- aggregate(corine2018,aggregation_factor,

fun=function(x,...){ sum(x==23)/(aggregation_factor^2) })

# Calculate proportional cover for class 24 (Coniferous forest) and add to raster stack

corine2018_1km <- addLayer(corine2018_1km,

aggregate(corine2018,aggregation_factor,

fun=function(x,...){ sum(x==24)/(aggregation_factor^2) }))

# Calculate proportional cover for class 25 (Coniferous forest) and add to raster stack

corine2018_1km <- addLayer(corine2018_1km,

aggregate(corine2018,aggregation_factor,

fun=function(x,...){ sum(x==25)/(aggregation_factor^2) }))

# Add names to layers

names(corine2018_1km) <- c('broadleaved_forest','coniferous_forest','mixed_forest')

# Plot the proportional covers

plot(corine2018_1km)

# And don't forget to save the resulting raster

writeRaster(corine2018_1km, "data/corine2018_forestcover_1km.grd")Exercise:

- Pick a different spatial resolution (e.g. 5km or 10km) and calculate proportional cover of different agricultural land cover/use classes

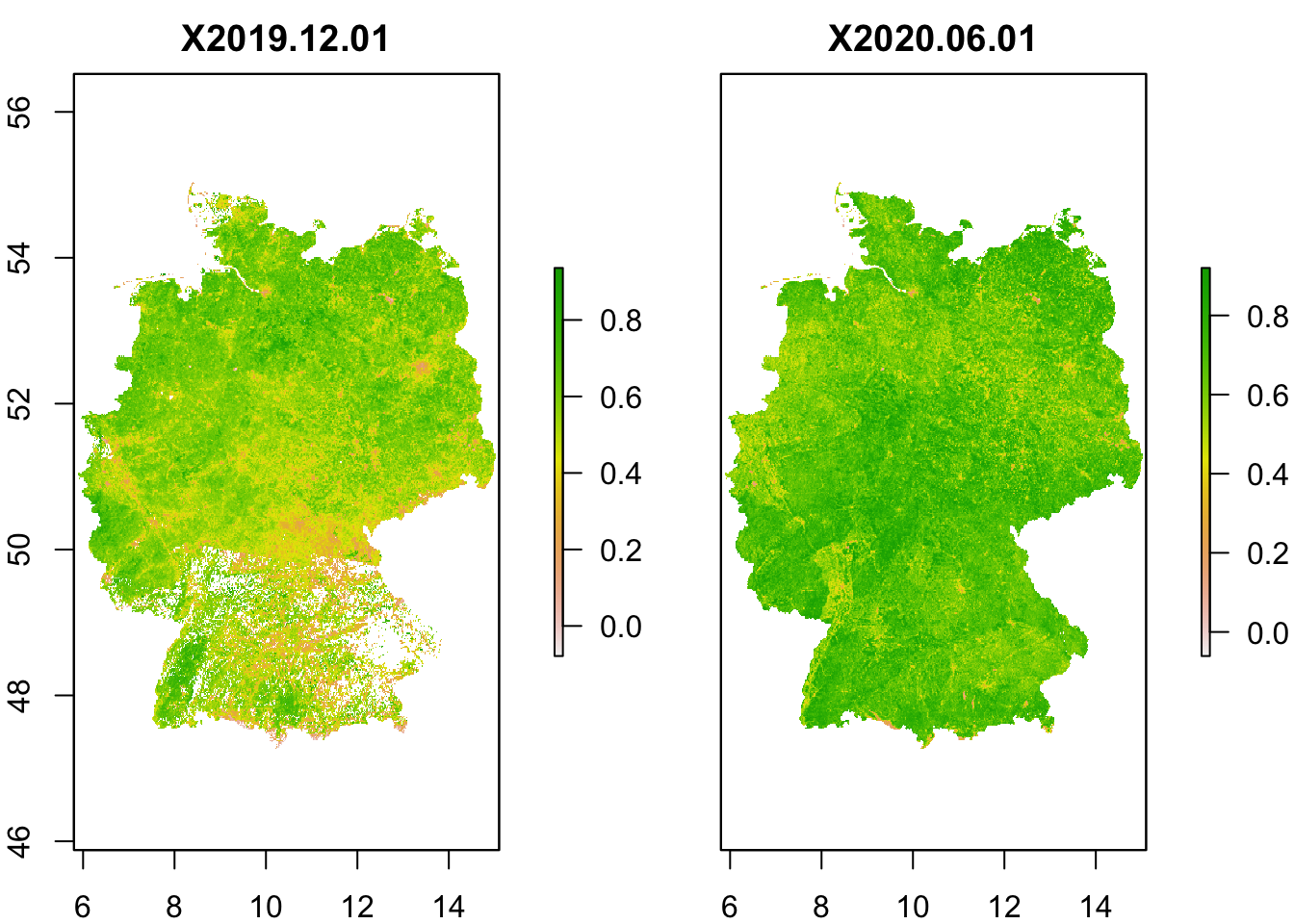

3 Remote sensing data

A lot of land cover products are in essence derived from remote sensing data. But there are also other indicators that can be derived from remote sensing information, for example greenness. One such indicator is the Normalised Difference Vegetation Index (NDVI), which is derived from the spectral reflectance measurements acquired in the red (visible) and near-infrared wavebands. Healthy plants absorb red light and reflect near-infrared leading to strong differences in the reflectance of these wavebands. For living plants NDVI should always be positive, while for dead plants or rock or water surfaces NDVI will be negative. Healthy, dense canopy vegetation will have NDVI above 0.5. (These are just rough interpretations, please read up more on NDVI for exact details.)

NDVI data from 2014 onwards can be downloaded from Copernicus Global Land Service (CGLS, https://land.copernicus.eu/global/products/ndvi). The data are available at 300m or 1km resolution every two weeks. Older NDVI data for 1985-2015 are available as product of NOAA Global Inventory Monitoring and Modeling System (GIMMS) from the Big Earth Data Platform, with 8km resolution and images every two weeks.

I will not go into detail of how to download the NDVI data. The data are typically stored in NetCDF format which can also be read into R. I have already downloaded 1km NDVI data from the CGLS database for two time slices (01/12/2019 and 01/06/2020) and extracted the data for Germany. Course participants can download the raster stacks from moodle (please put it into your data folder).

# Read in raster stack

ndvi <- stack('data/NDVI_1km_DE.grd')

# plot NDVI layers

plot(ndvi)

Exercise:

- Plot the difference in summer and winter NDVI values. Where do we observe largest difference in greenness between summer and winter? Interpret.